Jump To: CANNON | FASSE | KEMPNER

Description

Researchers can take advantage of the scale of the FASRC cluster by setting up workflows to split many different tasks into large batches, which are scheduled across the cluster at the same time. Most clusters are made from commercial hardware, so do note that the performance of an individual single-threaded computation job may not be any faster than a local new workstation/laptop with new CPU and flash (SSD) storage; cluster computing is all about scale. Doing computations at scale allows a researcher to test many different variables at once, thereby shorter time to outcomes, and also provides the ability to ask larger, more complex problems (I.e. larger data sets, longer simulation time, more degrees of freedom, etc.)

Please Note: The FASRC research clusters cannot host class accounts and academics due to FERPA and other requirements. Please work with University RC and ATG to set up your course on the Academic Cluster and Canvas. See: https://atg.fas.harvard.edu/ondemand

Key Features and Benefits

General access to the cluster is provided via ssh (secure shell, SHA-256 or better encryption) to a set of login servers, which is the staging point for submitting jobs. Most of the time these are on the public network with multi-factor authentication. These hosts are not designed for compute intensive tasks as many users (100s) are logged in simultaneously. As well, graphical or web-based login may be provided (e.g. Open OnDemand, NoMachine, or Science Gateway like Galaxy).

Discrete units of computational tasks are sent to a job scheduler, normally defined within a job script.

In a cluster, these servers are dedicated to performing computations, as opposed to storing data or databases. They normally contain a larger number of cores and memory in comparison to a workstation/laptop, and have a high-performance network (10 Gbps or more) connecting them together. They may also have specialized hardware to off-load computations like Graphical Processing Units (GPUs) or Field Programmable Gate Arrays (FPGAs).

In order to maximize the utilization of the cluster hardware, jobs are submitted in batches to the queuing system (aka “the scheduler”). This system manages the state of all the resources on the compute nodes, schedules jobs based upon requirements provided by the user, and tracks usage. There are a set of commands that users use to submit jobs, cancel jobs, query current state of jobs, and query the historical usage of jobs.

A scheduler is used to disperse jobs on the cluster in some fair and meaningful way when there is contention for resources. There are two main scheduling algorithms that most schedulers use. FIFO (first in first out) and is good for smaller, more homogenous user communities. Fairshare, is designed to give each grouping (whether lab group or individual user) equal access to resources over time. Therefore, this uses the historical usage to gauge how much resources to allocate. In Fairshare, each user has an allocated amount of shares of the whole cluster, which they use when they run jobs and are based upon the amount of resources they use for a given time. Their shares accumulate back over time.

Jobs go through various states during the course of using the queuing system. Upon submission, they are pending until allocated resources to run, then running, then completed. If the job did not complete successfully it would have a failed state, or it could have been terminated by user or admin and have the state canceled. A job in the suspended state is paused, whereby the process is stopped, and the memory remains allocated, and this job could be resumed. These states are displayed when a user queries the status of a job.

Each job is assigned a priority in the queue to order them in line for next dispatch. A job with high priority would be at the top of the list. The priority value normally is made up from the sum of values that include Fairshare, size of job (cores, memory, time limit), queue, and age of pending job.

These are a logical grouping of computing resources that could be grouped for use of similar hardware or to separate accesses. A job must select a queue to submit to (or the default will be automatically selected). Queues can be set up to allow for compute nodes to be shared among multiple jobs or dedicated to single jobs in an exclusive manner. Also, queues can have a relative priority for jobs to be scheduled from.

Each queuing system requires that you specify resources for each job (or it uses the defaults). These basic requirements are the number of processing cores, amount of memory, and time/duration of the job.

There are three basic modes of doing computations in parallel. 1) Tightly coupled, where a collection of processes on different nodes are working in tandem on the same task passing information through the network usually via MPI (message passing interface) with RDMA (remote direct memory access) to each other's process memory segment. This relies on a low-latency network and run-time libraries to be added to the code. 2) Threaded, where a collection of processes on a single node are sharing the same memory space via OpenMP (or parallel in python or doParallel in R). 3) Loosely coupled, where the tasks are largely independent and controlled by an external script to submit tasks in a loop and sometimes analyze the output and resubmit the next iteration. In this sense, it is pleasantly parallel, as it doesn’t require updating the code with MPI or OpenMP library calls, but just orchestrates the tasks remotely.

Not all computations need be batched with job scripts, a user can submit a job that allows direct access to a compute node. This is great for compiling code, debugging workflows, or running short calculations interactively. As well, most clusters provide an interface that allows for a Graphical User Interface to be displayed over the network (I.e. X11 forwarding, VNC, Web socket, etc.). Interactive sessions are not meant to be long running, as the interactive nature would require input from the user and if the user isn’t present, then the resources will go unused for some time, which defeats the purpose of batch scheduling.

FASRC offers resources and training regarding storage, data management, data science, and software support for faculty, staff, and cores. To learn more visit rc.fas.harvard.ed

Service Expectations and Limits:

Cluster-computing is not intended to be a larger version of a single machine. Scaling computations to take advantage of a cluster may require changes to how the computation is configured and executed. As cluster-computing is a shared resource, many factors affect performance including other computations on the same compute nodes competing for memory, storage, and network. In addition, due to the nature of research that is being performed, it isn’t always well understood by the end user how scaling out their computations on a cluster will differ from performing them on a single device in terms of resource requirements (cores, memory, time, data storage), as these are managed in a different way on a single device. It is always best for researchers to test smaller data sets and batches of tasks before submitting hundreds or thousands.

At FASRC, availability, uptime, and backup schedule is provided as best effort with staff that do not have rotating 24/7/365 shifts.

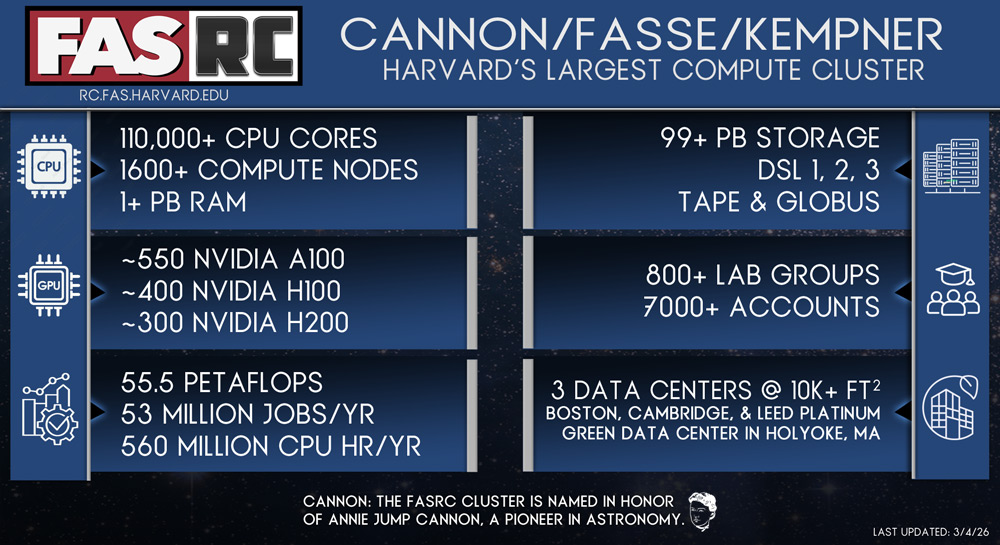

Cannon |

Queuing System: SLURM

Security Levels: Level 1 (DSL1), Level 2 (DSL2)

Shared: Resources are provided by FASRC and are distributed evenly amongst the various lab groups.

- Access: All PI groups.

- Scheduling: Fairshare, see https://www.rc.fas.harvard.edu/fairshare/ for more details.

- Queue/Partition names: See our partitions table at Running Job/Partitions

- Features: See our partitions table at Running Job/Partitions

- Cost: Billing is done at the school level so please consult your school administrator.

Dedicated: Resources are provided by Centers, Departments, or PIs

- Access: Restricted PI groups from Center/Department/Lab.

- Scheduling: Fairshare, see https://www.rc.fas.harvard.edu/fairshare/ for more details.

- Queue/Partition name: pi_name or center name (e.g. huce)

- Features: time limits vary, non-exclusive nodes, multi-node parallel

- Cost: Billing is done at the school level so please consult your school administrator.

Backfill: Users have the ability to utilize unused parts of the dedicated queues.

- Access: All PI groups.

- Scheduling: Fairshare, see https://www.rc.fas.harvard.edu/fairshare/ for more details.

- Queue/Partition name: serial_requeue, gpu_requeue

- Backfilled by Kempner nodes

- Features: See our partitions table at Running Job/Partitions

- Cost: Billing is done at the school level so please consult your school administrator.

As is customary with high-performance computing clusters home directories are provided to each individual user and scratch/temp space is provided to each PI Group.

Home directories - Isilon

- Features: Regular performance, snapshot, DR copy, network attached to cluster (NFS), quota, SMB

- Mount point: /home/username

- Quota: 100 GB

- Cost: Included as part of Cluster Computing

Scratch - Vast

- Features: High-performance, temporary, single copy, network attached to cluster, quota (files + size), 90 day retention policy.

- Mount point: /n/netscratch/pi_lab

- Quota: 50 TB max per Lab

- Cost: Included as part of Cluster Computing

All Research Facilitation services pertaining to the use of the cluster are included in the cost.

Available to:

All PIs from a supported Harvard School with an active FASRC account. For more information see Account Requests

FAS Secure Environment (FASSE) |

Queuing System: SLURM

Security Levels: Level 3 (DSL3)

See: https://docs.rc.fas.harvard.edu/kb/fasse/

Shared: Resources are provided by FASRC and are distributed evenly amongst the various lab groups.

- Access: All PI groups.

- Scheduling: Fairshare, see https://www.rc.fas.harvard.edu/fairshare/ for more details.

- Queue/Partition name: shared, general, bigmem, test, gpu_test

- Features: See our partitions table at Running Job/Partitions

- Due to segregated nature, does not backfill to other partitions/clusters

- Cost: Billing is done per project at the school level. See Billing FAQ for more details.

Kempner |

Queuing System: SLURM

Security Levels: Level 1 (DSL1), Level 2 (DSL2)

Shared: Resources are provided by FASRC and are distributed amongst the various Kempner groups.

- Access: Kempner PI groups.

- Scheduling: Fairshare, see https://www.rc.fas.harvard.edu/fairshare/ for more details.

- Queue/Partition name: CPU and GPU-specific partitions as well as all Cannon partitions (except Kempner/HMS labs who can only use Kempner partitions). See our partitions table at Running Job/Partitions

- Backfills idle cycles into the Cannon cluster

- Features: Exclusive and non-exclusive nodes, multi-node parallel

Please note that some resources such as GPU queues may have different limits.

- Cost: Billing is done at the school level.