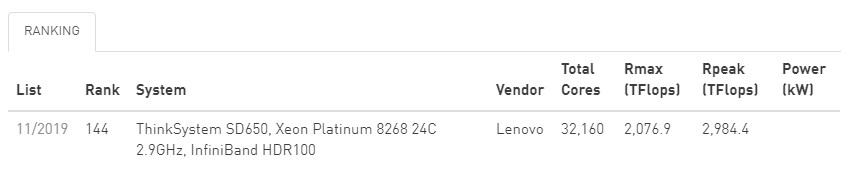

The November 2019 Top 500 List has just added Cannon, and we're very pleased to say we've ranked #144 overall and #7 on the US academic list. We and Harvard FAS are very proud to have Cannon in such a prominent position on the list. It demonstrates Harvard's commitment to pushing the envelope to provide its researchers with cutting-edge resources to enhance and accelerate their work. We look forward to working with our user community to see where they take Cannon and what new and exciting research results from it.

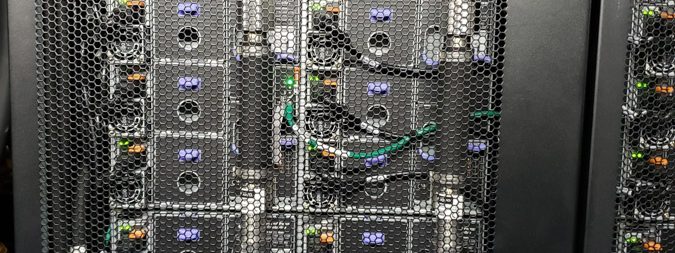

Cannon employs a complex, yet very robust and fault-tolerant, liquid cooling system developed by Lenovo to cool each node more efficiently. This solution tackles two problems: it reduces the reliance on inefficient, but still necessary, heat removal via air handling, and it allows the CPUs to run at higher clock speeds without additional air cooling. The latter is key to our reaching such a significant spot on the Top 500 List without having to use more cores.

For us, this is especially effective as MGHPCC, our LEED Platinum green data center, does not cool the entire room as computer rooms have done historically. Instead, the ambient temperature runs around 80 degrees Fahrenheit and each back-to-back row uses hot aisle containment so that heat is sequestered at the rear of the racks and extracted from there. The Cannon row is noticeably cooler than other aisles, which is good not just for the facility's air handling, but for staff and the overall life and health of the machines. For the time being, Cannon's water loop is a closed system, but in the future it and other systems could be tied into the facility to create a facility-wide liquid heat exchange system.

You may hear us talk about Cannon as the aggregate of all cores tied to our scheduler, as we tend to adopt one name for everything. That number puts us at over 100,000 cores for the Cannon 'collective'. But the core of Cannon, the machine that's in the Top 500 or 'Cannon actual', comprises 32,160 cores of Intel's new Cascade Lake Xeon 8268 CPUs across 670 nodes, housed two per chassis in Lenovo's water-cooled SD650 chassis. These 670 nodes, and 1.286 TB of RAM, are what gave us a score of 2,076.89 TFLOP/s (that's roughly 2.077 PetaFLOPs) in Linpack performance and put us on the Top 500.
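As a rough sanity check on the figures above, the arithmetic can be sketched in a few lines of Python. The variable names are illustrative, and the per-node and per-core values are simply the quoted totals divided out. They are not separately published or measured numbers.

```python
# Figures quoted in the post for 'Cannon actual'.
TOTAL_CORES = 32_160      # cores in the Top 500 machine
NODES = 670               # compute nodes
RMAX_TFLOPS = 2_076.89    # Linpack score in TFLOP/s

# Cores per node, derived by division (not stated directly in the post).
cores_per_node = TOTAL_CORES // NODES

# Convert the Linpack score to PFLOP/s (1 PFLOP/s = 1,000 TFLOP/s).
rmax_pflops = RMAX_TFLOPS / 1_000

# Average Linpack throughput per node and per core, again derived by division.
tflops_per_node = RMAX_TFLOPS / NODES
gflops_per_core = RMAX_TFLOPS * 1_000 / TOTAL_CORES

print(f"cores per node:      {cores_per_node}")
print(f"Linpack (PFLOP/s):   {rmax_pflops:.3f}")
print(f"TFLOP/s per node:    {tflops_per_node:.2f}")
print(f"GFLOP/s per core:    {gflops_per_core:.2f}")
```

The division comes out to a whole number of cores per node, which suggests the core and node counts in the post are consistent with each other.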

Lenovo SD650 dual-node chassis with cooling system highlighted.


You can also read more about Cannon and the Project Everyscale council, which was created by Lenovo and Intel and of which we are a part, on HPCwire:

Lenovo and Intel Fuel Harvard University’s First Liquid-Cooled Supercomputer, Create Council to Drive Broad Adoption of Exascale Technology