Monthly maintenance October 4th, 2021 7am-11am

Regular monthly maintenance will take place Monday, October 4th, 2021, from 7am to 11am.

NOTICES

- Update to the fairshare mechanism to help break ties within lab groups. This small adjustment is weighted by a user's individual usage and breaks ties internal to a group. The majority of cluster users will see no discernible change. https://docs.rc.fas.harvard.edu/kb/fairshare/#Nice

- New cluster hardware comes online Sept 30th, increasing overall computational power by 25%

- new block of 18 water-cooled GPU nodes, each with NVIDIA SXM4 (4x A100 cards)

- new block of 36 water-cooled “big mem” compute nodes, each with 2x Intel 8358 processors (64 cores total) and 512 GB of RAM

- retirement of less-efficient gear including most AMD compute and K80 GPUs

- gratis fairshare has been increased to 120 for all labs

See the blog post from Scott (or Sept 13th email) for more details: https://www.rc.fas.harvard.edu/blog/2021-cluster-upgrade/

- Holyscratch cleanup will remove many retention exceptions

- Owners of that data have been notified

- Training sessions available: https://www.rc.fas.harvard.edu/upcoming-training/

- New Storage Service Center launched FY22 (July 1st 2021). See Billing FAQ https://www.rc.fas.harvard.edu/policy/billing-faq/ and list of storage tiers https://www.rc.fas.harvard.edu/services/data-storage/. Individual PIs will be contacted about setting up their billing.

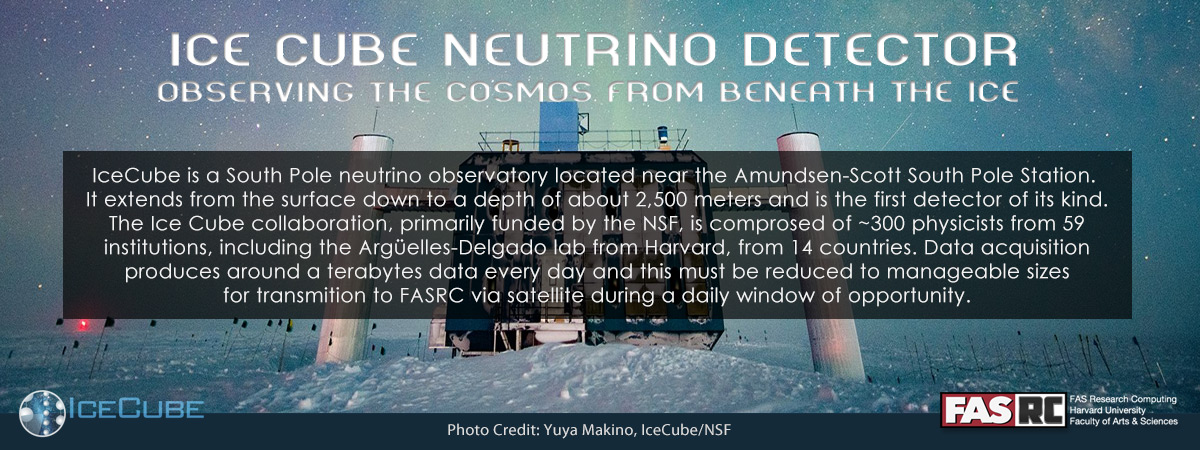

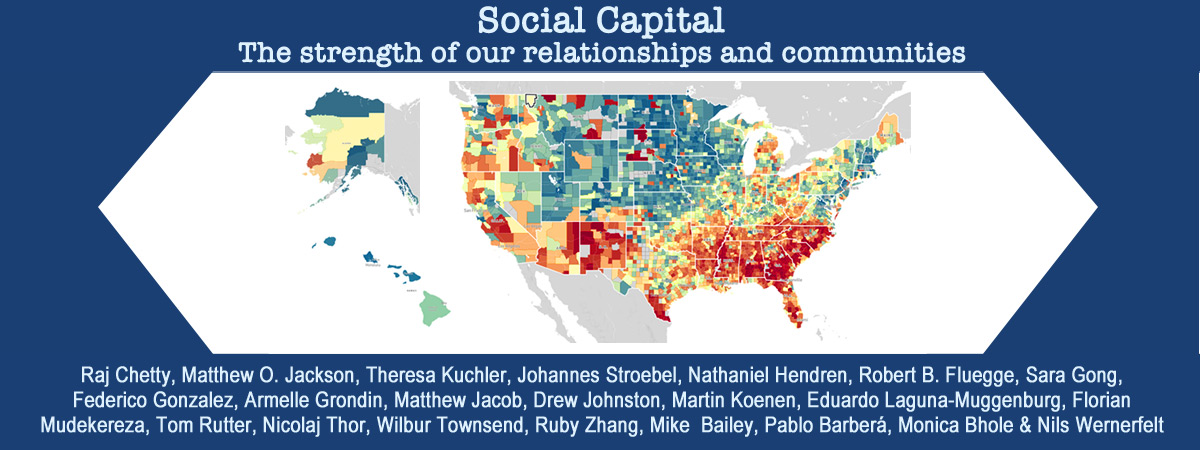

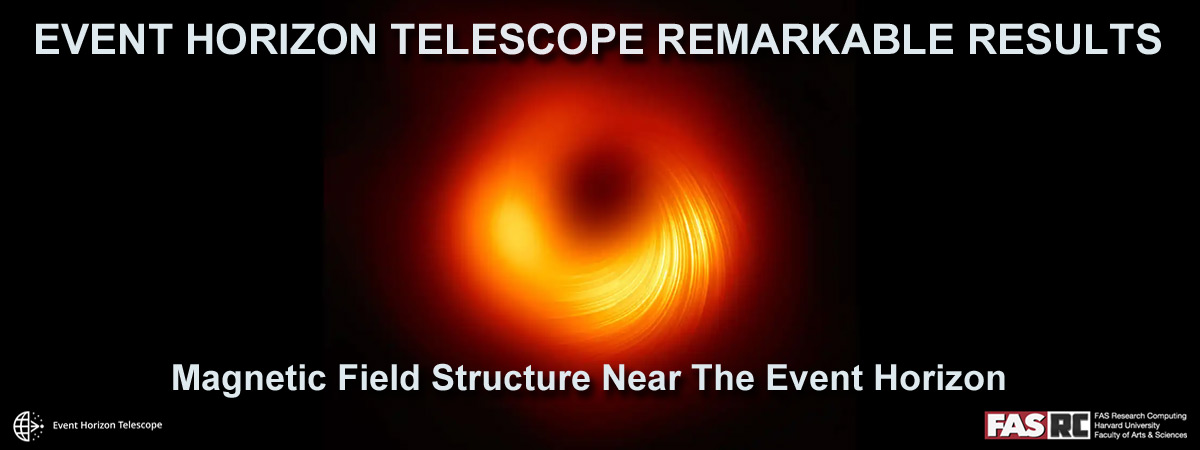

- If you have a 2021/2021 publication that made use of the FASRC cluster and you don't find it listed here, please do let us know: https://www.rc.fas.harvard.edu/cluster/publications/

GENERAL MAINTENANCE

- NCF VDI/OoD - Retire old Jupyter/Jupyterlab apps

- Audience: NCF VDI/OpenOnDemand users

- Impact: Removes old apps; existing/new apps are not affected.

- hsphs10 fileserver will be decommissioned on October 1st

- Audience: Users of hsphs10

- Impact: Tenants have been notified, so no expected impact

- Boston and Holyoke Isilon upgrades

- Audience: Users of Isilon (Tier1) shares in both data centers

- Impact: None expected. Upgrades should roll across redundant nodes with no downtime

- QSS SID beta deployment - Deferred

- Audience: Beta testers

- Impact: None

- www.rc.fas.harvard.edu and docs.rc.fas.harvard.edu upgrades

- Audience: Visitors to either website

- Impact: Both sites will be down for approximately 10 minutes each

- Login node and VDI node reboots

- Audience: Anyone logged into a login node or VDI/OOD node

- Impact: Login and VDI/OOD nodes will be unavailable while updating and rebooting

- Scratch cleanup ( https://docs.rc.fas.harvard.edu/kb/policy-scratch/ )

- Audience: Cluster users

- Impact: Files older than 90 days will be removed.

- Reminder: Scratch 90-day file retention purging runs occur regularly, not just during maintenance periods.
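For anyone who wants to check ahead of a purge which of their scratch files might be affected, here is a minimal sketch in Python. It assumes file age is judged by modification time, which may not match the criterion the purge actually uses; the scratch policy page linked above is the authority. The function name `files_older_than` and the directory passed on the command line are hypothetical and only for illustration.

```python
import os
import sys
import time
from pathlib import Path

RETENTION_DAYS = 90  # retention window stated in the notice


def files_older_than(root, days=RETENTION_DAYS, now=None):
    """Return files under root whose modification time is more than `days` days old.

    Assumption: age is measured by mtime. The real purge may use a
    different timestamp; check the scratch policy page.
    """
    now = time.time() if now is None else now
    cutoff = now - days * 86400  # 86400 seconds per day
    old = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = Path(dirpath) / name
            try:
                # lstat so that symlinks are examined themselves, not their targets
                if path.lstat().st_mtime < cutoff:
                    old.append(path)
            except OSError:
                # File disappeared or is unreadable; skip it
                continue
    return sorted(old)


if __name__ == "__main__":
    # Directory to scan: first argument, or the current directory by default
    root = sys.argv[1] if len(sys.argv) > 1 else "."
    for p in files_older_than(root):
        print(p)
```

Run it against your own scratch directory and copy anything you still need to a non-scratch storage location. The script only lists files; it never deletes or modifies anything.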

Thanks,

FAS Research Computing

https://www.rc.fas.harvard.edu

https://docs.rc.fas.harvard.edu

https://status.rc.fas.harvard.edu